Projects

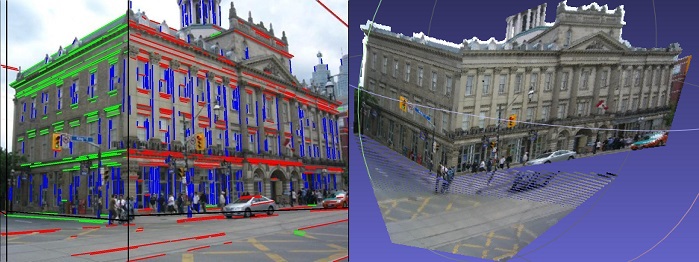

Single Image Reconstruction

This project aims to do the semantic labelling and 3D reconstruction from a single outdoor RGB image. To address this problem, we first calibrate the camera in every image from detected vanishing points. Then we parse the scene with attributed grammar and do the 3D reconstruction with the geometry constraints betweene points, lines and planes.

Collaborator:

Joey C. Yu ,

Xiaobai Liu

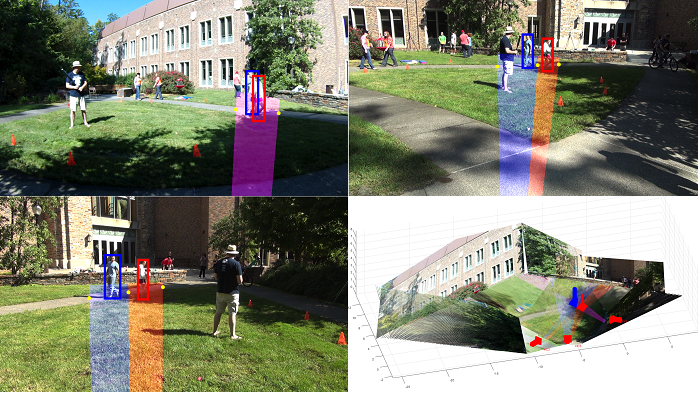

Multi-view Tracking

This project aims to address a multi-object tracking problem using a few cameras. Traditional tracking problem focuses on only single view, so the tracking id may vary when objects overlap or disappear for a while. However multiview tracking can solve above problems and increase tracking accuracy by tracking objects in a 3D background.

Collaborator:

Yuanlu Xu ,

Xiaobai Liu

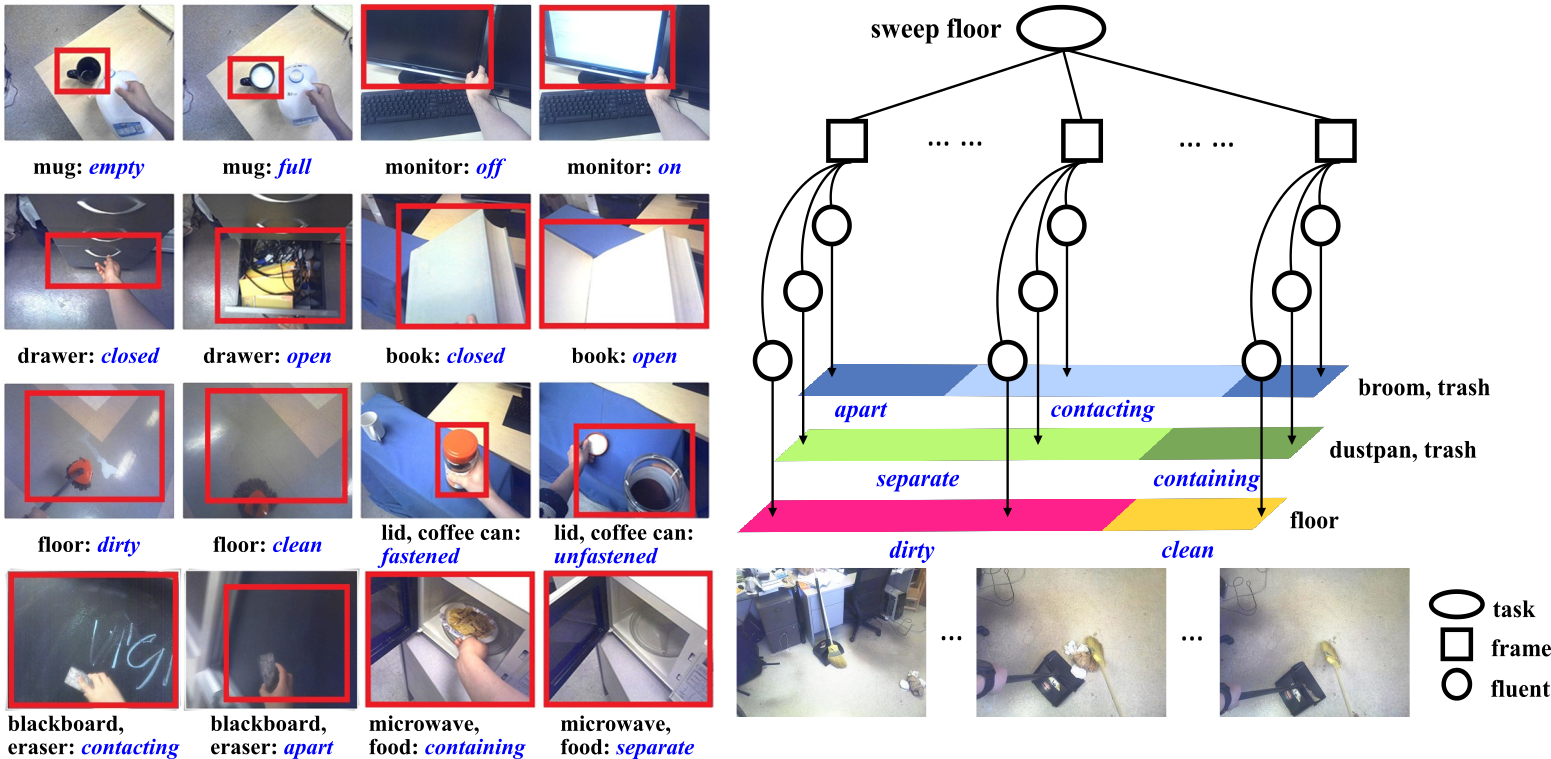

Jointly Recognition of Fluents and Tasks

This project aims to addresses the problem of jointly recognizing object fluents and tasks in egocentric videos. Fluents are the changeable attributes of objects. Tasks are goaloriented human activities which interact with objects and aim to change some attributes of the objects. The process of executing a task is a process to change the object fluents over time. We propose a hierarchical model to represent tasks as concurrent and sequential object fluents. In a task, different fluents closely interact with each other both in spatial and temporal domains. Given an egocentric video, a beam search algorithm is applied to jointly recognizing the object fluents in each frame, and the task of the entire video. We collected a large scale egocentric video dataset of tasks and fluents. This dataset contains 14 categories of tasks, 25 object classes, 21 categories of object fluents, 809 video sequences, and approximately 333,000 video frames.

Collaborator:

Ping Wei